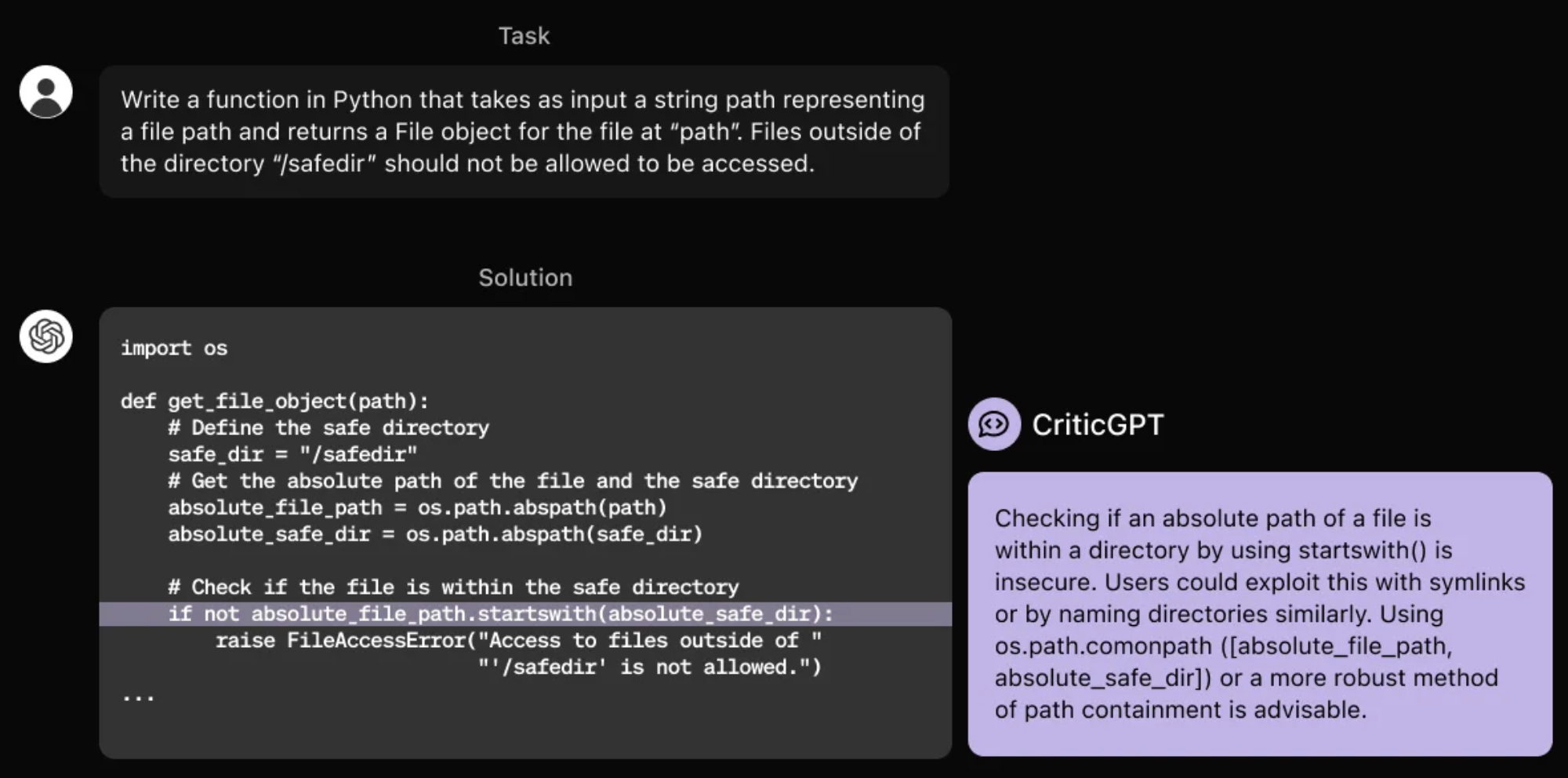

OpenAI has developed CriticGPT, a model trained to identify bugs in GPT-4’s code. They are beginning to integrate such models into the RLHF alignment pipeline to assist humans in supervising AI on complex tasks.

CriticGPT, based on GPT-4, writes critiques of ChatGPT responses to help human trainers detect mistakes during RLHF.

What is CriticGPT?

CriticGPT, a model based on GPT-4, was developed to catch errors in ChatGPT’s code output. In the reported research, people who reviewed ChatGPT code with CriticGPT’s help outperformed unassisted reviewers 60% of the time. Work is underway to integrate models like CriticGPT into the RLHF labeling pipeline so that trainers get explicit AI support. The aim is to improve the evaluation of outputs from advanced AI systems, which are hard for people to assess without better tools.

The GPT-4 series models, which power ChatGPT, are designed to be helpful and interactive through “Reinforcement Learning from Human Feedback” (RLHF). A critical component of RLHF involves collecting comparisons where AI trainers rate different ChatGPT responses against each other.

As reasoning and model behavior improve, ChatGPT becomes more accurate and its errors become more subtle. Inaccuracies are then harder for AI trainers to spot, which complicates the comparison tasks that RLHF depends on. This is a fundamental limitation of RLHF, and it sharpens as models gradually become more knowledgeable than any human who could provide feedback.

To address this challenge, CriticGPT has been trained to write critiques that highlight inaccuracies in ChatGPT’s answers.

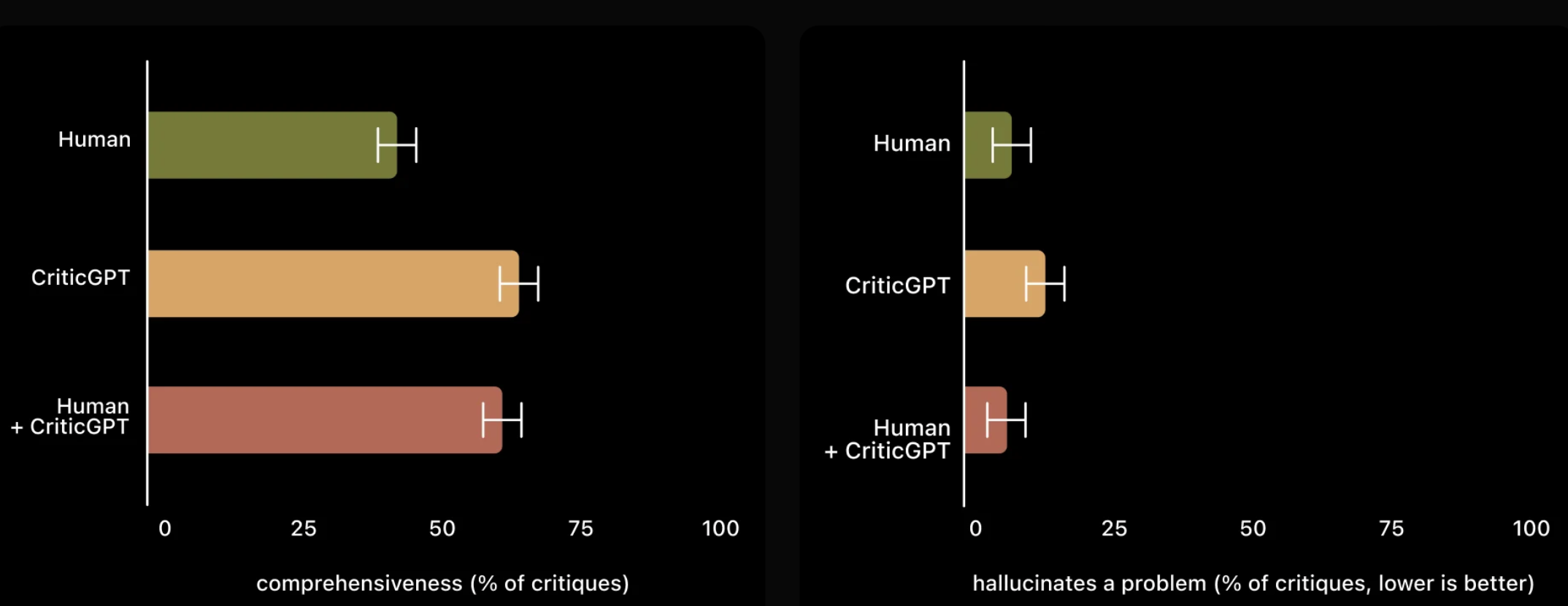

CriticGPT’s suggestions are not always accurate, but they significantly assist trainers in identifying many more issues with model-generated answers than they would without AI support.

Moreover, a person working with CriticGPT writes more comprehensive critiques than a person working alone, and the human-model team produces fewer hallucinated bugs than the model working alone. In experiments, a second random trainer preferred critiques from the Human+CriticGPT team over those from an unassisted person more than 60% of the time.

Methods

CriticGPT was trained with Reinforcement Learning from Human Feedback (RLHF), as ChatGPT was. Unlike ChatGPT, it saw a large number of inputs containing mistakes that it then had to critique. AI trainers manually inserted these mistakes into code written by ChatGPT and wrote example feedback as if they had caught the bug themselves. The same trainer then compared multiple critiques of the modified code, which made it easy to tell when a critique caught the inserted bug.

Experiments evaluated whether CriticGPT could catch both inserted bugs and naturally occurring ChatGPT errors previously identified by a trainer. Trainers preferred CriticGPT’s critiques over ChatGPT’s in 63% of cases involving naturally occurring bugs, partly because CriticGPT produces fewer “nitpicks” (small, unhelpful complaints) and hallucinates problems less often.
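The bug-insertion setup described above can be sketched as a small data model. This is a minimal illustration, not OpenAI's pipeline: the names `TamperedExample`, `Critique`, `catches_inserted_bug`, and `preference_rate` are hypothetical, and matching a critique to a bug by line number is a simplifying assumption (in the source, human trainers make that judgment).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TamperedExample:
    """Code with a bug that a trainer inserted on purpose (hypothetical schema)."""
    question: str
    tampered_code: str
    bug_line: int              # assumed: the line where the bug was inserted
    reference_critique: str    # feedback the trainer wrote for their own bug


@dataclass
class Critique:
    """A critique from some model, reduced to the lines it flags."""
    author: str
    flagged_lines: List[int]
    text: str


def catches_inserted_bug(example: TamperedExample, critique: Critique) -> bool:
    """True if the critique flags the line where the bug was inserted."""
    return example.bug_line in critique.flagged_lines


def preference_rate(winners: List[str], model: str) -> float:
    """Fraction of pairwise comparisons in which `model` was preferred."""
    if not winners:
        raise ValueError("need at least one comparison")
    return sum(1 for w in winners if w == model) / len(winners)
```

Given a list of comparison winners such as `["critic", "baseline", "critic"]`, `preference_rate(winners, "critic")` yields the kind of percentage figure quoted in the text.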

Additional test-time search against the critique reward model also made it possible to generate longer and more comprehensive critiques. The search procedure controls how aggressively the model looks for problems in the code, which sets a precision-recall trade-off between hallucinations and detected bugs and yields critiques that are highly beneficial for RLHF. More details are available in the research paper.
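One way to picture this precision-recall control is best-of-n selection with a tunable bonus for each flagged issue. The sketch below is an assumption made for illustration, not the search procedure from the paper: `Candidate`, `select_critique`, `reward_fn`, and `issue_bonus` are hypothetical names, and the toy scoring table stands in for a trained critique reward model.

```python
from typing import Callable, List, NamedTuple


class Candidate(NamedTuple):
    text: str
    num_issues: int  # how many problems this critique flags


def select_critique(
    candidates: List[Candidate],
    reward_fn: Callable[[str], float],
    issue_bonus: float,
) -> Candidate:
    """Pick the candidate maximizing reward plus a per-issue bonus.

    A larger issue_bonus favors critiques that flag more problems
    (higher recall, more risk of hallucinated bugs); a bonus of zero
    defers entirely to the reward model (more conservative).
    """
    if not candidates:
        raise ValueError("need at least one candidate critique")
    return max(
        candidates,
        key=lambda c: reward_fn(c.text) + issue_bonus * c.num_issues,
    )


# Toy stand-in for a reward model: fixed scores per critique text.
toy_scores = {"short, precise": 0.9, "long, aggressive": 0.7}
candidates = [
    Candidate("short, precise", num_issues=1),
    Candidate("long, aggressive", num_issues=4),
]

conservative = select_critique(candidates, toy_scores.__getitem__, issue_bonus=0.0)
aggressive = select_critique(candidates, toy_scores.__getitem__, issue_bonus=0.1)
```

With no bonus the higher-scoring short critique is chosen; raising the bonus tips the choice toward the critique that flags more issues, which is the dial between fewer hallucinations and more detected bugs.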

Limitations

CriticGPT was trained on relatively short ChatGPT answers. Supervising future agents will require methods to help trainers understand long and complex tasks. Models still hallucinate, and trainers occasionally make labeling errors influenced by these hallucinations. Real-world mistakes can often be distributed across multiple parts of an answer, while the current work focuses on errors that can be pointed out in a single location. Future efforts need to address these dispersed errors. CriticGPT’s assistance is limited; for extremely complex tasks or responses, even an expert with model assistance may find it challenging to evaluate them accurately.

Next Steps

Aligning increasingly complex AI systems requires better tools. The research on CriticGPT suggests that applying RLHF to GPT-4 could help humans produce better RLHF data for GPT-4. Plans are in place to scale this work further and put it into practice.
