Text in GPT and embeddings models is processed in segments known as tokens. These tokens symbolize frequently appearing character sequences. To illustrate, the phrase “tokenization” is broken down into “token” and “ization”, whereas a brief and common word such as “the” is expressed as one token. It’s noteworthy that in a sentence, the first token of every word usually initiates with a space character. You can utilize OpenAI’s tokenizer tool to examine specific strings and see their token translation. Roughly, 1 token equals around 4 characters or 0.75 words in English text.

An important constraint to remember is that for a GPT model, the combined prompt and output generated should not exceed the model’s maximum context length. As for embeddings models (which don’t produce tokens), the input must be less than the model’s maximum context length. The maximum context lengths for all GPT and embeddings models are listed in the model index.

GPT Tiktoken is a fast Byte pair encoding tokeniser for use with OpenAI’s models. You can download the Python library on GitHub:

import tiktoken

enc = tiktoken.get_encoding("cl100k_base")

assert enc.decode(enc.encode("hello world")) == "hello world"

enc = tiktoken.encoding_for_model("gpt-4")

The open source edition of tiktoken is available for installation from PyPI using the following command:

pip install tiktoken

The documentation for the tokenizer API can be found in tiktoken/core.py.

You can find sample code utilizing tiktoken in the OpenAI Cookbook.

What is Byte pair encoding?

GPT Models perceive text differently from how we do – they interpret it as a series of numbers, known as tokens. A method called Byte Pair Encoding (BPE) is employed to transform text into these tokens, and it comes with several advantageous features:

- It’s reversible and lossless, which means tokens can be converted back to the original text without losing any information.

- It works with any text, even if that text was not part of the tokenizer’s training data.

- It compresses the text: the sequence of tokens is shorter than the bytes representing the original text. In practice, each token typically corresponds to approximately 4 bytes.

- It tries to allow the model to recognize common subwords. For example, “ing” is a frequently occurring subword in English, so BPE encodings often split a word like “encoding” into tokens such as “encod” and “ing” (as opposed to, say, “enc” and “oding”). Seeing the “ing” token in various contexts aids models in generalizing and gaining a better grasp of grammar.

If you’re interested in delving deeper into the specifics of BPE, GPT tiktoken includes an educational submodule that is more accessible. It provides code that aids in visualizing the BPE process.

Extending GPT Tiktoken

If you’re interested in enhancing tiktoken to accommodate new encodings, there are two approaches you can use.

- You can construct your Encoding object to your exact specifications and distribute it as needed.

- You can employ the

tiktoken_extplugin mechanism to register your Encoding objects with tiktoken.

The latter is only useful if you need your encoding to be found by tiktoken.get_encoding; otherwise, the first option is recommended.

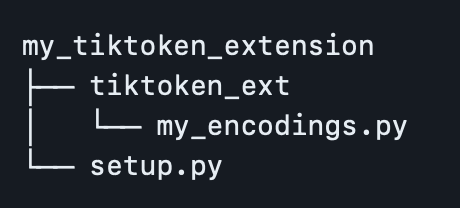

To achieve this, you must create a namespace package under tiktoken_ext. Arrange your project in the following way, making sure to exclude the tiktoken_ext/__init__.py file:

Your my_encodings.py should be a module that contains a variable named ENCODING_CONSTRUCTORS. This variable is a dictionary from an encoding name to a function that doesn’t require arguments and returns arguments that can be used to construct that encoding with tiktoken.Encoding. For an example, refer to tiktoken_ext/openai_public.py. For more specific details, you can check tiktoken/registry.py.

from setuptools import setup, find_namespace_packages

setup(

name=”my_tiktoken_extension”,

packages=find_namespace_packages(include=[‘tiktoken_ext*’]),

install_requires=[“tiktoken”],

…

)

After setting up, you just need to run the command pip install ./my_tiktoken_extension and your custom encodings should be ready to use! Remember not to use an editable installation.

Read more related articles: