At its first DevDay conference in November 2023, OpenAI announced a series of significant updates to its platform, along with lower prices. Highlights from the announcement include:

- The introduction of GPT-4 Turbo, a model that is more capable and cheaper than GPT-4 and supports a 128K-token context window.

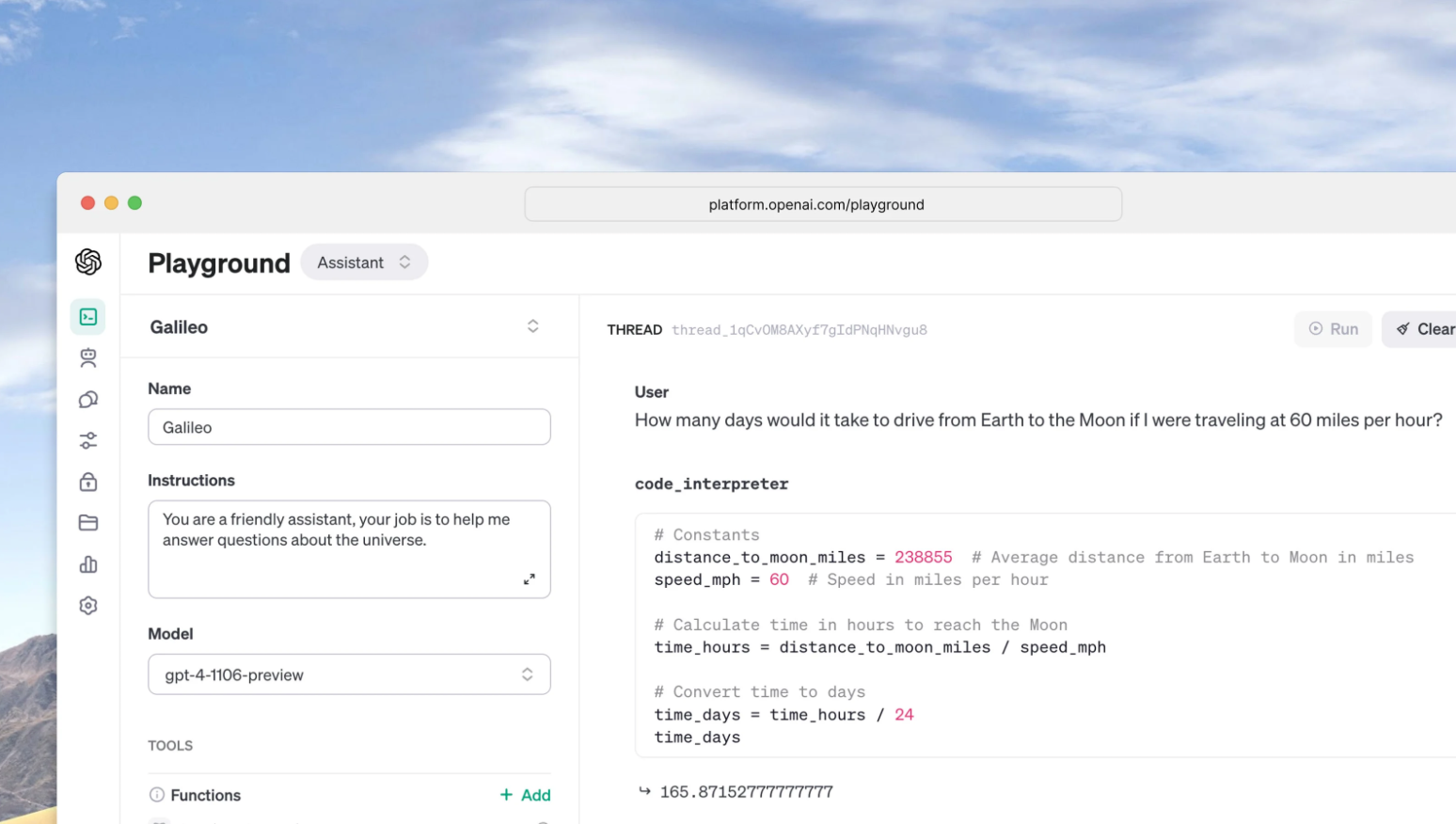

- The launch of a new Assistants API, designed to make it easier for developers to build their own assistive AI applications that pursue goals and can call models and tools.

- The unveiling of new multimodal capabilities on the platform, including vision, image generation with DALL·E 3, and text-to-speech.

GPT-4 Turbo model

GPT-4 Turbo, the successor to GPT-4 (first released in March and made generally available to all developers in July), is now available in preview. It is more capable than GPT-4, has knowledge of world events up to April 2023, and its 128K context window can fit the equivalent of more than 300 pages of text in a single prompt. Performance optimizations have also allowed OpenAI to lower prices: compared with GPT-4, GPT-4 Turbo costs one-third as much for input tokens and half as much for output tokens. All paying developers can try GPT-4 Turbo by passing the preview model name in the API, and a stable, production-ready version is expected to be released shortly.
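To make the price difference concrete, here is a small Python sketch that estimates the cost of a single request. It assumes the launch list prices from November 2023 (GPT-4: $0.03 per 1K input tokens and $0.06 per 1K output tokens; GPT-4 Turbo: $0.01 and $0.03). These figures and the dictionary labels are assumptions for illustration, so check OpenAI's pricing page before relying on them.

```python
from decimal import Decimal

# Launch list prices in USD per 1,000 tokens (November 2023), assumed for illustration.
# The keys are labels for this sketch, not necessarily API model identifiers.
PRICES_PER_1K = {
    "gpt-4": {"input": Decimal("0.03"), "output": Decimal("0.06")},
    "gpt-4-turbo": {"input": Decimal("0.01"), "output": Decimal("0.03")},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of one request from its input and output token counts."""
    price = PRICES_PER_1K[model]
    # Decimal avoids binary floating-point rounding in money arithmetic.
    return (price["input"] * input_tokens + price["output"] * output_tokens) / 1000


if __name__ == "__main__":
    # A 10,000-token prompt that produces a 1,000-token answer.
    for name in PRICES_PER_1K:
        print(name, estimate_cost(name, 10_000, 1_000))
```

Under these assumed prices, a 10,000-token prompt with a 1,000-token answer costs about $0.36 on GPT-4 and $0.13 on GPT-4 Turbo.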

Function calling updates

Function calling has also been improved. Function calling lets developers describe the functions of their application, or of external APIs, to the model, which can then intelligently choose to output a JSON object containing the arguments needed to call those functions. The model can now call multiple functions in response to a single message, so a user can ask for several actions at once, such as "open the car window and turn off the A/C", instead of needing several round trips with the model.
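The Python sketch below shows the shape of a parallel function-calling round trip, assuming the Chat Completions `tools` format: each function is described with a JSON Schema, the assistant message carries a list of `tool_calls` whose `arguments` field is a JSON string, and each result is sent back as a `role: "tool"` message tied to its `tool_call_id`. The car functions and the sample tool calls are hypothetical and hand-written rather than captured API output, so the example runs without a network call.

```python
import json


# Hypothetical local functions the model may ask the application to call.
def open_car_window(window: str) -> dict:
    return {"window": window, "state": "open"}


def turn_off_ac() -> dict:
    return {"ac": "off"}


# Function descriptions that would be passed as `tools=TOOLS` in a Chat Completions
# request (this offline sketch does not send a request).
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "open_car_window",
            "description": "Open one of the car's windows.",
            "parameters": {
                "type": "object",
                "properties": {"window": {"type": "string", "enum": ["driver", "passenger"]}},
                "required": ["window"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "turn_off_ac",
            "description": "Turn off the air conditioning.",
            "parameters": {"type": "object", "properties": {}},
        },
    },
]

DISPATCH = {"open_car_window": open_car_window, "turn_off_ac": turn_off_ac}


def run_tool_calls(tool_calls: list[dict]) -> list[dict]:
    """Run every tool call from one assistant message and build the `tool` reply messages."""
    replies = []
    for call in tool_calls:
        fn = DISPATCH[call["function"]["name"]]
        # `arguments` arrives as a JSON string; an empty string means no arguments.
        args = json.loads(call["function"]["arguments"] or "{}")
        result = fn(**args)
        replies.append({"role": "tool", "tool_call_id": call["id"], "content": json.dumps(result)})
    return replies


# Hand-written example of two parallel tool calls (illustrative, not real API output).
example_tool_calls = [
    {"id": "call_1", "type": "function",
     "function": {"name": "open_car_window", "arguments": '{"window": "driver"}'}},
    {"id": "call_2", "type": "function",
     "function": {"name": "turn_off_ac", "arguments": "{}"}},
]

if __name__ == "__main__":
    for reply in run_tool_calls(example_tool_calls):
        print(reply)
```

Keeping a single dispatch table means every call in the message is handled in one pass, and the resulting `tool` messages can be appended to the conversation before asking the model for its final answer.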

Improved JSON mode

GPT-4 Turbo performs better than previous models on tasks that require carefully following instructions, such as generating output in a specific format. It also supports a new JSON mode, which ensures that the model responds with valid JSON. This is particularly useful for developers who need to generate JSON in the Chat Completions API outside of function calling.
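A minimal Python sketch of a JSON mode request body and a check on the reply. It assumes the `response_format={"type": "json_object"}` parameter introduced alongside GPT-4 Turbo; the model name `gpt-4-1106-preview` in the usage lines is the preview identifier from launch and may since have changed. The check that the prompt mentions JSON is a conservative guard based on OpenAI's guidance to instruct the model to produce JSON, and `build_json_mode_request` and `parse_json_reply` are hypothetical helper names.

```python
import json


def build_json_mode_request(model: str, system_prompt: str, user_prompt: str) -> dict:
    """Build a Chat Completions request body with JSON mode enabled (hypothetical helper)."""
    messages = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]
    # Conservative guard: JSON mode expects the prompt itself to ask for JSON.
    if not any("json" in m["content"].lower() for m in messages):
        raise ValueError("ask for JSON in the system or user message when using JSON mode")
    return {
        "model": model,
        "messages": messages,
        "response_format": {"type": "json_object"},
    }


def parse_json_reply(content: str, required_keys: set) -> dict:
    """Parse a JSON mode reply and check for the keys the application needs.

    JSON mode targets syntactically valid JSON, not a particular schema,
    so the required keys are still checked here.
    """
    data = json.loads(content)
    if not isinstance(data, dict):
        raise TypeError("expected a JSON object")
    missing = required_keys.difference(data)
    if missing:
        raise KeyError(f"reply is missing keys: {sorted(missing)}")
    return data


if __name__ == "__main__":
    request = build_json_mode_request(
        "gpt-4-1106-preview",
        "You extract data and reply in JSON.",
        "List three primary colors under the key 'colors'.",
    )
    print(json.dumps(request, indent=2))
    # A hand-written reply standing in for the model's message content.
    print(parse_json_reply('{"colors": ["red", "yellow", "blue"]}', {"colors"}))
```

Validating required keys on the application side keeps the guarantee boundary clear: the API is responsible for well-formed JSON, and the caller is responsible for the shape it expects.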

New seed parameter

The new seed parameter enables reproducible outputs by making the model return consistent completions most of the time. This gives developers more control over model behavior and is useful for replaying requests when debugging, writing more comprehensive unit tests, and getting more consistent results in general. OpenAI reports that it has already been using this feature internally for its own unit tests with great success.
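The Python sketch below shows one way to use the seed parameter in a test. It assumes a `seed` field in the request body and a `system_fingerprint` field in each response that identifies the backend configuration. Because completions are only expected to match when every other parameter and the fingerprint are the same, the comparison helper reports an inconclusive result when fingerprints differ. The helper names, the fixed temperature of 0, and the sample responses are assumptions for illustration.

```python
from typing import Optional


def build_seeded_request(model: str, messages: list, seed: int, temperature: float = 0.0) -> dict:
    """Build a Chat Completions request body with a fixed seed.

    Every other parameter is held fixed as well, since changing the prompt
    or sampling settings changes the output even with the same seed.
    """
    return {"model": model, "messages": messages, "seed": seed, "temperature": temperature}


def compare_seeded_runs(resp_a: dict, resp_b: dict) -> Optional[bool]:
    """Compare two completions produced from identical seeded requests.

    Returns None when the system_fingerprint values differ or are missing,
    because a backend change can alter outputs even with the same seed.
    Otherwise returns whether the first choice's message content matches.
    """
    fp_a = resp_a.get("system_fingerprint")
    fp_b = resp_b.get("system_fingerprint")
    if fp_a is None or fp_a != fp_b:
        return None
    text_a = resp_a["choices"][0]["message"]["content"]
    text_b = resp_b["choices"][0]["message"]["content"]
    return text_a == text_b


if __name__ == "__main__":
    request = build_seeded_request(
        "gpt-4-1106-preview", [{"role": "user", "content": "Name a color."}], seed=42
    )
    # Hand-written stand-ins for two API responses (not real output).
    run_1 = {"system_fingerprint": "fp_example", "choices": [{"message": {"content": "Blue"}}]}
    run_2 = {"system_fingerprint": "fp_example", "choices": [{"message": {"content": "Blue"}}]}
    print(request["seed"], compare_seeded_runs(run_1, run_2))
```

Returning `None` rather than `False` on a fingerprint change lets a test suite skip or flag the comparison instead of reporting a spurious failure, which matches the "most of the time" nature of the guarantee.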

Model customization

OpenAI is creating an experimental access program for GPT-4 fine-tuning. Preliminary results show that fine-tuning GPT-4 takes more work to achieve meaningful improvements over the base model than the substantial gains seen with GPT-3.5 fine-tuning. Work on GPT-4 fine-tuning continues, with a focus on improving quality and safety. As it progresses, developers who are actively fine-tuning GPT-3.5 will be given the option to apply to the GPT-4 program directly within the fine-tuning console.

In addition to fine-tuning, OpenAI is introducing a Custom Models program aimed at organizations requiring a higher level of customization than what fine-tuning offers. This is particularly relevant for domains with vast proprietary datasets, on the scale of billions of tokens. Participating organizations will collaborate closely with OpenAI researchers to develop a GPT-4 model tailored to their specific needs. This comprehensive customization extends to every phase of the model training process, including domain-specific pre-training and a bespoke reinforcement learning (RL) post-training regimen.

Organizations in this program will have exclusive access to their custom models. True to OpenAI’s privacy commitments, these custom models will not be served to or shared with other customers, nor will they be used to train other models. Any proprietary data provided to OpenAI to train a custom model will be used only for that purpose.

Given the resources and expertise required for such tailored development, the Custom Models program will initially be both exclusive and costly. Organizations with an interest in this high level of customization are invited to apply through the designated channel provided by OpenAI.

Other updates

In the coming weeks, OpenAI plans to launch a feature that returns log probabilities for the most likely output tokens generated by GPT-4 Turbo and GPT-3.5 Turbo, which will be useful for building features such as autocomplete in a search experience.
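Since the log probability feature had not shipped at the time of the announcement, the following Python sketch only illustrates the idea. It assumes a hypothetical list of `(token, logprob)` pairs for the next token and converts each log probability back to a probability with `exp` to rank autocomplete suggestions. The input format, the `rank_suggestions` name, and the thresholds are assumptions, not OpenAI's API.

```python
import math


def rank_suggestions(
    top_logprobs: list[tuple[str, float]], k: int = 3, min_prob: float = 0.05
) -> list[tuple[str, float]]:
    """Rank candidate next tokens for autocomplete.

    top_logprobs: hypothetical list of (token, natural-log probability) pairs.
    Returns up to k (token, probability) pairs with probability >= min_prob,
    most likely first.
    """
    scored = [(token, math.exp(logprob)) for token, logprob in top_logprobs]
    kept = [(token, p) for token, p in scored if p >= min_prob]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept[:k]


if __name__ == "__main__":
    # Made-up candidates for the text that follows "What's the".
    candidates = [
        (" weather", math.log(0.60)),
        (" time", math.log(0.10)),
        (" news", math.log(0.25)),
        (" xyz", math.log(0.01)),
    ]
    for token, prob in rank_suggestions(candidates):
        print(repr(token), round(prob, 2))
```

The probability floor keeps very unlikely tokens out of the suggestion list even when fewer than `k` candidates remain.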

OpenAI has also released a new version of GPT-3.5 Turbo that supports a 16K context window by default and shares several of GPT-4 Turbo’s improvements, including better instruction following, JSON mode, and parallel function calling. Developers can access the new model by passing its new model name in the API. Applications that use the generic GPT-3.5 Turbo model name will be upgraded to it automatically on a scheduled date, and the previous models will remain accessible by their specific names until mid-2024 for those who need them.
